Artemis Use Cases

Artemis has several use cases that complement the traditional HPC clusters, Discovery and Endeavour.

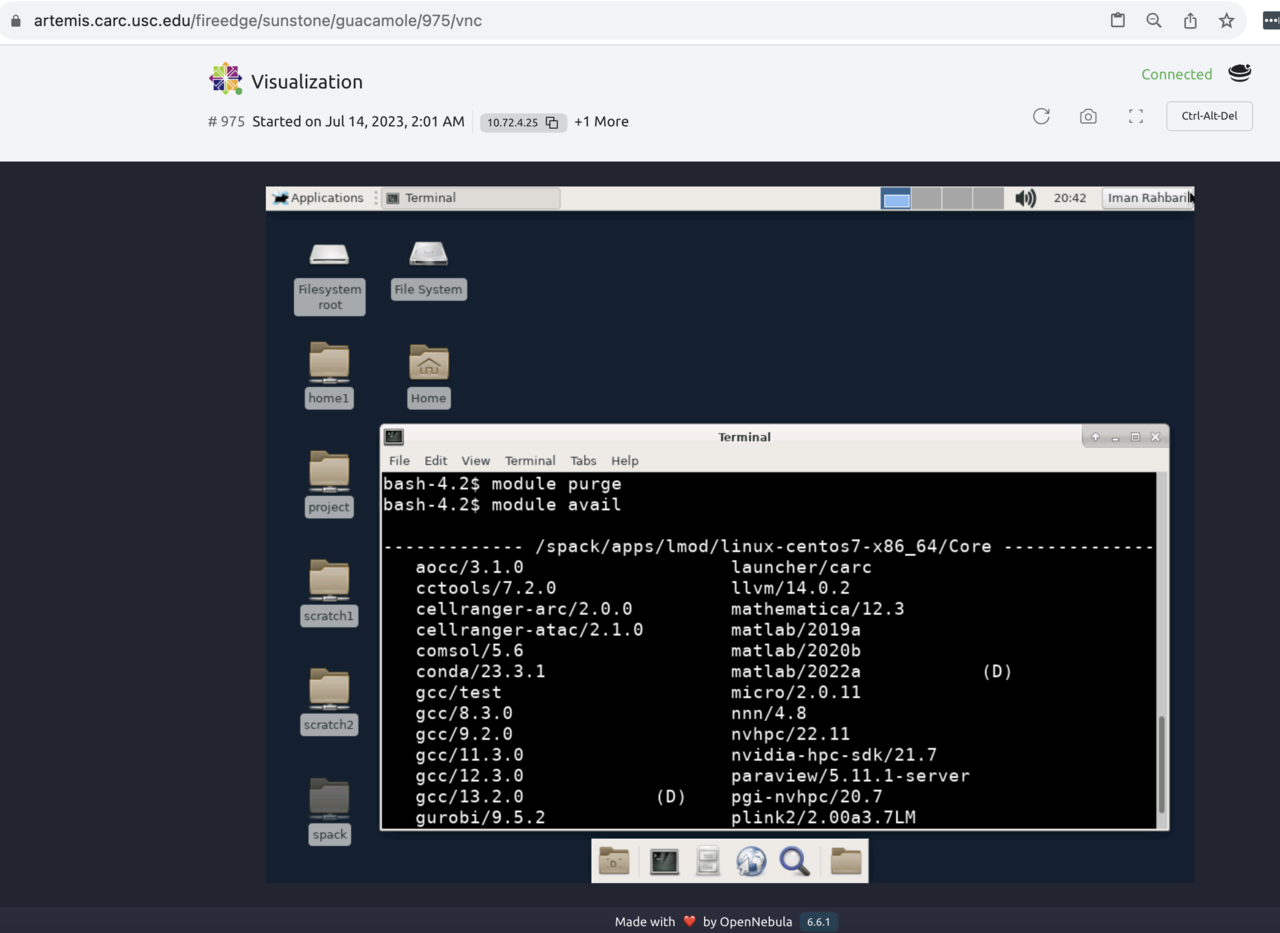

1 Deploying a Virtual Desktop on CARC, Login node analog

One of the most common uses of the Artemis platform is to create a VM identical to the login nodes on Discovery or Endeavour. This VM provides a graphical user interface (GUI) for building and test-running applications. From the VM, users can also log in to the Discovery or Endeavour clusters to submit jobs that need larger resources.

The login nodes on the HPC clusters are shared among all CARC users, so running processes on them for an extended time is not allowed.

The Virtual Desktop feature of Artemis is especially useful when installing software packages that use a graphical installer, like Ansys or StarCCM+, or when installing or testing software takes a long time to complete. There is no need to start an interactive session or submit a job to a SLURM queue. Open a terminal app inside your VM and run your program.

CARC currently does not offer GPUs in VMs, so CUDA-accelerated code will not work.
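Because the VMs have no GPU, a script that is shared between the VM and the clusters may want to check for a GPU before choosing a CUDA code path. The following is a minimal sketch, not a CARC-provided tool: the function name `gpu_available` is hypothetical, and it assumes that a working `nvidia-smi` command is a reasonable proxy for a usable NVIDIA GPU.

```python
import shutil
import subprocess


def gpu_available() -> bool:
    """Return True if `nvidia-smi -L` runs successfully and lists a GPU.

    Assumption: the presence of a working nvidia-smi is used as a
    heuristic for a usable NVIDIA GPU; this is not an official check.
    """
    if shutil.which("nvidia-smi") is None:
        # The NVIDIA driver tools are not on PATH, so assume no GPU.
        return False
    try:
        result = subprocess.run(
            ["nvidia-smi", "-L"],  # -L lists the GPUs visible to the driver
            capture_output=True,
            text=True,
            timeout=10,
        )
    except (OSError, subprocess.TimeoutExpired):
        return False
    return result.returncode == 0 and "GPU" in result.stdout


if __name__ == "__main__":
    if gpu_available():
        print("GPU detected: the CUDA code path can be used.")
    else:
        print("No GPU detected: use the CPU code path.")
```

On an Artemis VM this check is expected to take the CPU branch, so GPU work should still be submitted to the clusters.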

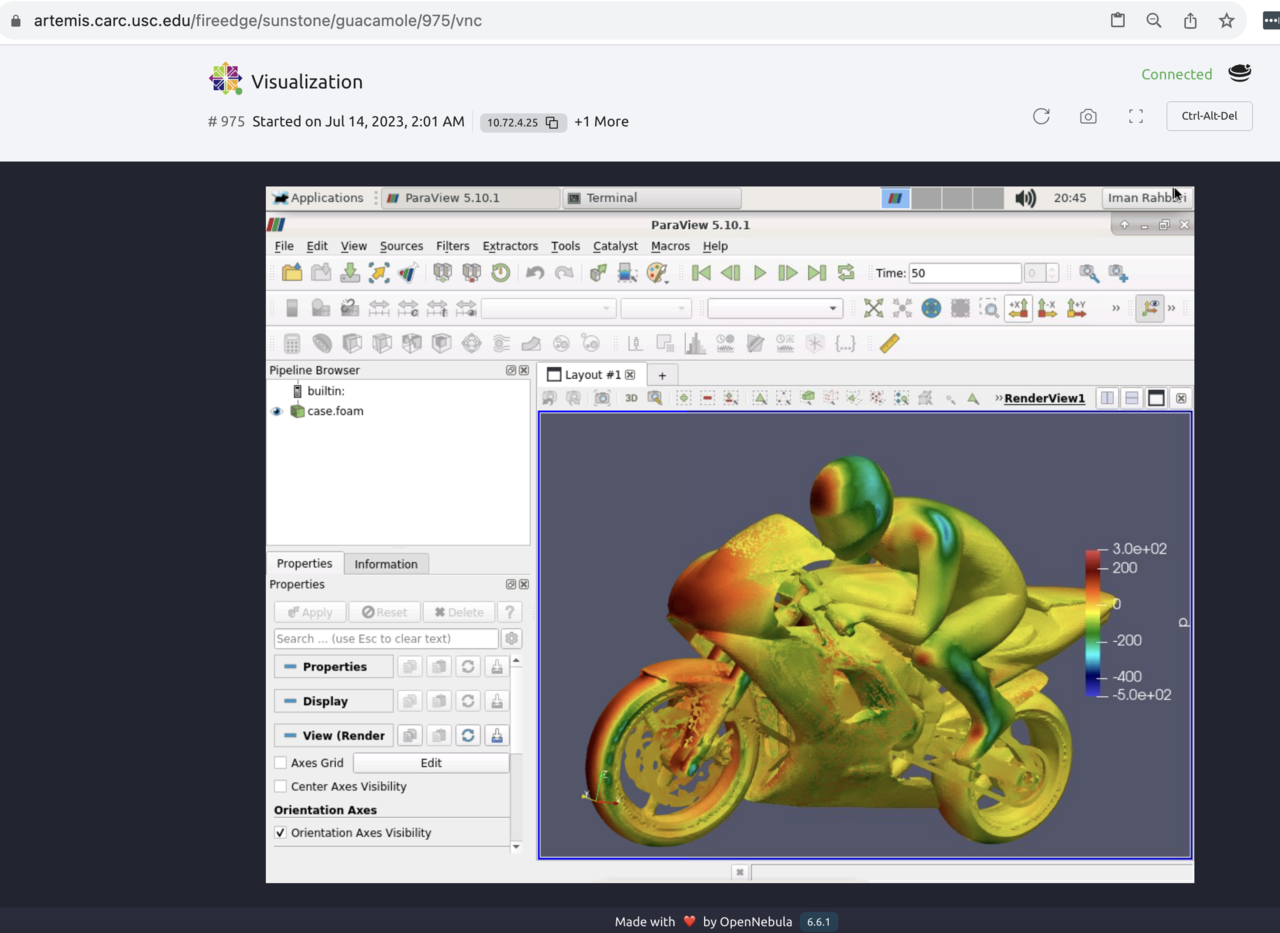

2 Visualization and postprocessing

Postprocessing simulation results on traditional HPC systems can be challenging, mainly due to two factors:

- Disabled X11 forwarding on the compute nodes, which requires transferring large amounts of data to local workstations for visualization.

- Inadequacy of parallel file systems when working with many small files. This is due to the significant metadata overhead and increased file system locking, leading to performance degradation and inefficient disk space utilization.
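To judge whether a results directory is affected by the small-file problem described above, it can help to count how many files fall below some size. The sketch below is illustrative only: the function name `count_small_files` and the 1 MiB default threshold are assumptions, not CARC recommendations.

```python
from pathlib import Path


def count_small_files(root: Path, threshold_bytes: int = 1024 * 1024) -> tuple[int, int]:
    """Return (total_files, small_files) for all regular files under root.

    A file counts as "small" when its size is below threshold_bytes.
    The 1 MiB default is an arbitrary, illustrative choice.
    """
    total = 0
    small = 0
    for path in root.rglob("*"):
        if path.is_file():
            total += 1
            if path.stat().st_size < threshold_bytes:
                small += 1
    return total, small


if __name__ == "__main__":
    total, small = count_small_files(Path("."))
    print(f"{small} of {total} files are smaller than 1 MiB")
```

A directory where most files are small is a candidate for bundling into a single archive before it is moved or processed.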

Artemis solves both problems:

- First, Artemis provides GUI access to VMs that have a direct high-speed connection to the parallel file systems, which eliminates the need to transfer data to lab workstations.

- Second, Artemis VMs have a high-speed SSD-based file system. Users can zip many small files into a single archive, transfer it to Artemis storage, and run the postprocessing scripts there instead of on the parallel file systems.
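The zip-and-transfer workflow in the second point can be sketched with the Python standard library. This is a minimal example under stated assumptions: the function names `bundle_small_files` and `unpack_archive` and all paths are hypothetical placeholders, and the actual locations of the parallel file system and the VM's SSD storage depend on your project.

```python
import tempfile
import zipfile
from pathlib import Path


def bundle_small_files(src_dir: Path, archive_path: Path) -> int:
    """Pack every regular file under src_dir into one zip archive.

    Returns the number of files added. The archive should be written
    outside src_dir so that it does not try to include itself.
    """
    count = 0
    with zipfile.ZipFile(archive_path, "w", compression=zipfile.ZIP_DEFLATED) as zf:
        for path in sorted(src_dir.rglob("*")):
            if path.is_file():
                # Store paths relative to src_dir so the tree is preserved.
                zf.write(path, arcname=path.relative_to(src_dir).as_posix())
                count += 1
    return count


def unpack_archive(archive_path: Path, dest_dir: Path) -> None:
    """Extract the archive into dest_dir, creating it if needed."""
    dest_dir.mkdir(parents=True, exist_ok=True)
    with zipfile.ZipFile(archive_path) as zf:
        zf.extractall(dest_dir)


if __name__ == "__main__":
    # Demonstration in a temporary directory; on a real system src would
    # be on the parallel file system and dest on the VM's SSD storage.
    with tempfile.TemporaryDirectory() as tmp:
        root = Path(tmp)
        src = root / "results"
        src.mkdir()
        for i in range(5):
            (src / f"step_{i}.txt").write_text(f"value {i}\n")
        n = bundle_small_files(src, root / "results.zip")
        unpack_archive(root / "results.zip", root / "ssd_copy")
        print(f"Bundled and unpacked {n} files")
```

Moving one archive instead of thousands of individual files keeps the metadata load on the parallel file system low; the postprocessing scripts then read the unpacked copy on the VM's SSD storage.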